In the early 2020s, vector databases were the “new kids on the block”—a niche requirement for specialized machine learning teams. Fast forward to 2026, and they have become as fundamental to the modern tech stack as PostgreSQL or Redis. If you are building with LLMs, autonomous agents, or multimodal AI, you aren’t just managing data anymore; you’re managing meaning.

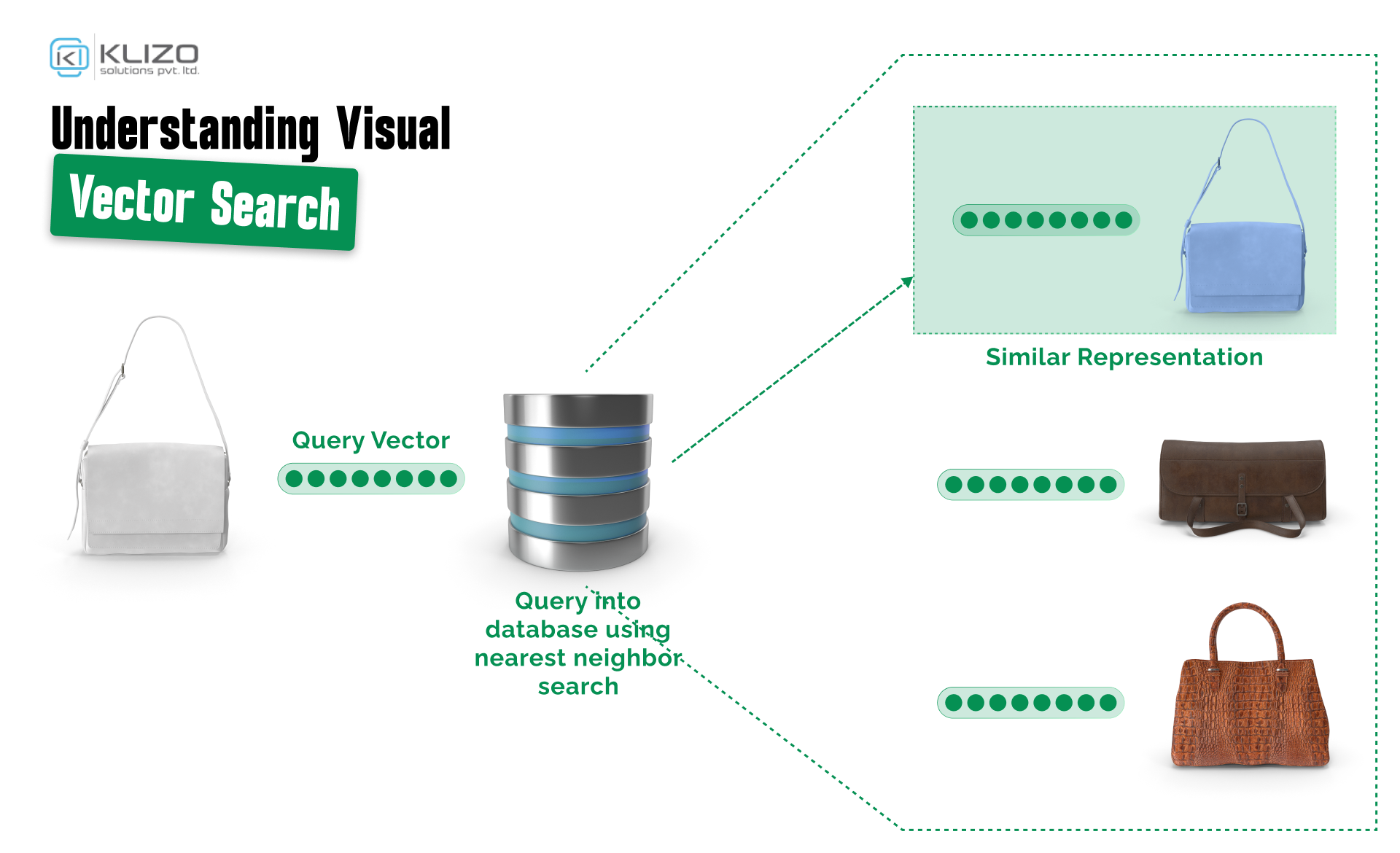

Traditional databases are great for answering “What is the price of item X?” but they fail at “Find me products that feel like a summer breeze.” This shift from exact matching to semantic similarity is why vector databases are now a multi-billion-dollar industry.

A vector database is a system purpose-built to store, index, and query data as high-dimensional embeddings — dense numerical representations of unstructured data like text, images, audio, and code.

Unlike relational databases that store rows and columns, vector databases store the mathematical meaning of data. Whether it’s a paragraph, a 4K video clip, or a user’s purchase history, an embedding model converts it into a fixed-length array of floats:

[0.12, -0.59, 0.88, ..., 0.04]

In a 1536-dimensional space (a common size for 2026 embedding models), the vector for “Smartphone” sits closer to “Android” than to “Banana.” This is the core principle that makes semantic search possible.
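To make "closer" concrete, here is a minimal sketch using cosine similarity, one common way to compare embeddings. The three 4-dimensional vectors are made-up toy values chosen for illustration, not output from a real embedding model.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical toy embeddings; real models emit hundreds or thousands of dimensions.
smartphone = [0.9, 0.8, 0.1, 0.0]
android = [0.8, 0.9, 0.2, 0.1]
banana = [0.0, 0.1, 0.9, 0.8]

sim_android = cosine_similarity(smartphone, android)
sim_banana = cosine_similarity(smartphone, banana)
print(f"smartphone vs android: {sim_android:.3f}")
print(f"smartphone vs banana:  {sim_banana:.3f}")
```

The first score comes out far higher than the second, which is all a similarity search needs in order to rank "Android" above "Banana."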

For decades, we relied on Inverted Indexes (the tech behind Elasticsearch). If you searched for “crimson footwear,” and the database only had “red shoes,” you got zero results.

Vector databases solve this via Semantic Search.

By 2026, we’ve reached a point where Hybrid Search is the gold standard. Modern developers use a combination of BM25 (keyword) and vector search to ensure that results are both semantically relevant and contain the specific technical terms required.
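One common way to merge a keyword ranking with a vector ranking is Reciprocal Rank Fusion (RRF). The article does not say which fusion method a given database uses, so treat this as a sketch of one option. The document IDs and the two result lists are hypothetical.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document IDs into one list.

    Each document scores sum(1 / (k + rank)) over the lists it appears in,
    with rank starting at 1. k=60 is a commonly used default.
    """
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda d: scores[d], reverse=True)

# Hypothetical result lists for a single query.
bm25_hits = ["doc_sku_123", "doc_red_shoes", "doc_boots"]
vector_hits = ["doc_red_shoes", "doc_sneakers", "doc_sku_123"]

fused = reciprocal_rank_fusion([bm25_hits, vector_hits])
print(fused)
```

Documents that rank well in both lists rise to the top, while a document found by only one retriever still makes the final list.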

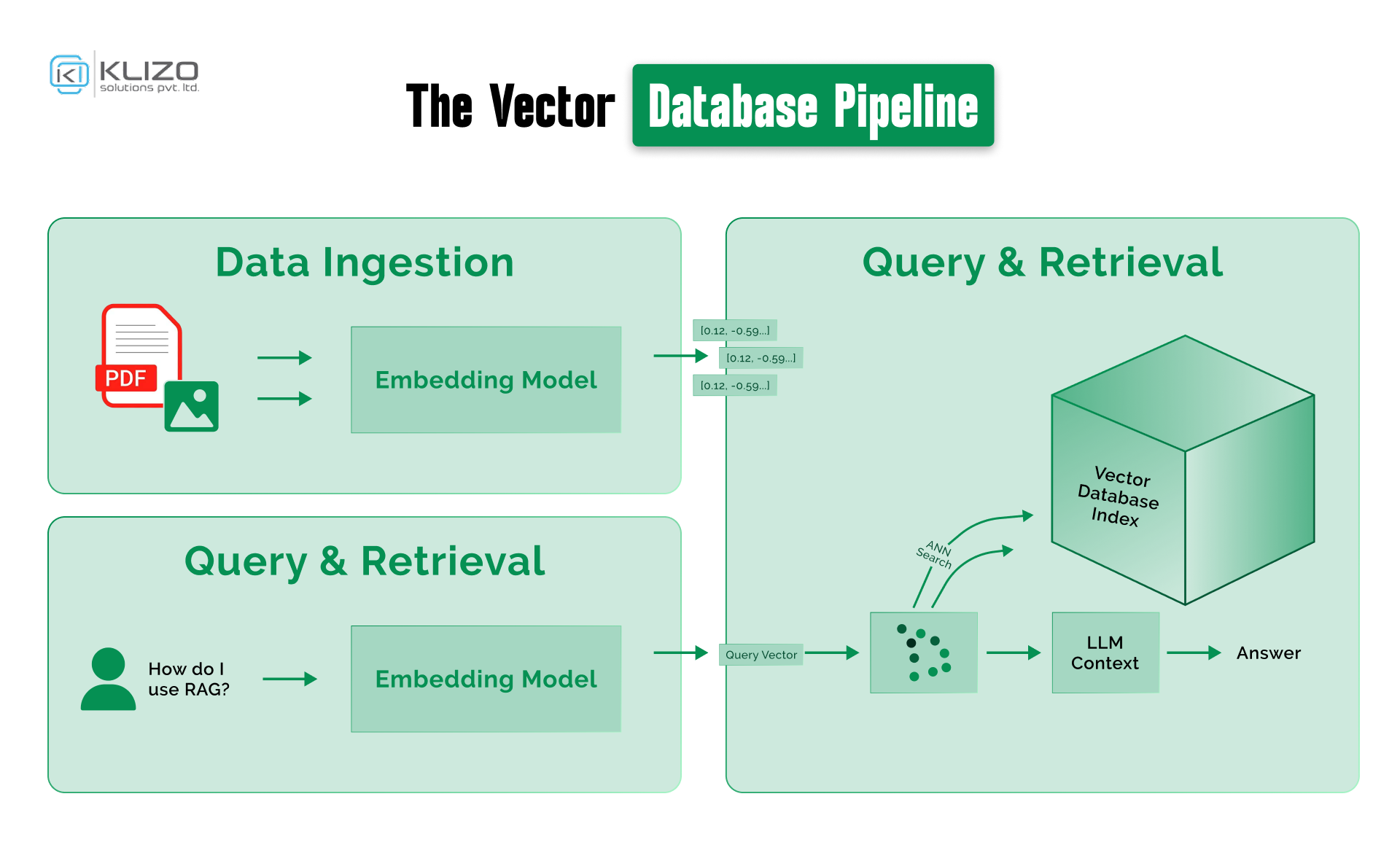

Building a production-ready AI application on vector databases requires understanding the full data pipeline — it’s not just “insert and query.”

The Vector Data Pipeline:

An embedding model (for example text-embedding-3, open-source BGE-M3, or Cohere’s embed-v4) converts data into high-dimensional embeddings.

This pipeline is the backbone of every RAG system, recommendation engine, and semantic search application in 2026.
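A rough end-to-end sketch of such a pipeline, assuming four stages (chunk, embed, store, query), might look like the following. Everything here is a stand-in: `toy_embed` is a hashed bag-of-words that only captures word overlap, and `InMemoryIndex` is a hypothetical class, not the API of any real vector database.

```python
import math
import zlib

DIMS = 256  # toy dimensionality chosen for this sketch

def chunk_text(text, max_words=10):
    """Split text into chunks of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]

def toy_embed(text):
    """Stand-in for an embedding model: hashed bag-of-words, L2-normalized.
    It captures word overlap only, not meaning."""
    vec = [0.0] * DIMS
    for word in text.lower().split():
        vec[zlib.crc32(word.encode("utf-8")) % DIMS] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]

class InMemoryIndex:
    """Hypothetical minimal store: keeps (vector, text) pairs and scans them all."""

    def __init__(self):
        self.items = []

    def upsert(self, text):
        self.items.append((toy_embed(text), text))

    def query(self, text, top_k=1):
        q = toy_embed(text)
        scored = [(sum(a * b for a, b in zip(q, vec)), chunk) for vec, chunk in self.items]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [chunk for _, chunk in scored[:top_k]]

index = InMemoryIndex()
document = ("Reset the router by holding the power button for ten seconds. "
            "The warranty covers hardware defects for two years after purchase.")
for chunk in chunk_text(document):
    index.upsert(chunk)

print(index.query("warranty for hardware defects", top_k=1))
```

In a real deployment the embedding call would go to a model like the ones named above, and the full scan in `query` would be replaced by an ANN index.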

You can’t run a linear scan across 100 million vectors — it would take seconds, an eternity at production scale. Instead, developers use Approximate Nearest Neighbor (ANN) algorithms.
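To see why a linear scan does not scale, here is a small sketch of exact brute-force search alongside the raw storage arithmetic. The 1536-dimension and float32 figures are assumptions carried over from the example above, and the function names are illustrative.

```python
import heapq

def raw_vector_bytes(num_vectors, dims, bytes_per_value=4):
    """Storage for the raw vectors alone (float32 by default), ignoring index overhead."""
    return num_vectors * dims * bytes_per_value

def brute_force_top_k(query, vectors, k=3):
    """Exact nearest neighbors by squared Euclidean distance: touches every vector."""
    def sq_dist(v):
        return sum((a - b) ** 2 for a, b in zip(query, v))
    return heapq.nsmallest(k, range(len(vectors)), key=lambda i: sq_dist(vectors[i]))

# 100 million vectors x 1536 dimensions x 4 bytes per float32
print(raw_vector_bytes(100_000_000, 1536) / 1e9, "GB")  # 614.4 GB

vectors = [[0.0, 0.0], [1.0, 1.0], [0.2, 0.1], [5.0, 5.0]]
print(brute_force_top_k([0.1, 0.1], vectors, k=2))  # [2, 0]
```

Every query computes one distance per stored vector, so the cost grows linearly with the dataset. ANN indexes trade a little recall to avoid visiting most of those vectors.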

HNSW builds a multi-layered graph: top layers have fewer nodes for fast navigation, bottom layers contain all points for precision.
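The "fewer nodes on top" shape comes from how HNSW assigns each point a random top layer. The sketch below simulates only that level-assignment rule, as described in the original HNSW paper, not the graph construction or search. The values `m=16`, 100,000 nodes, and the seed are arbitrary choices for illustration.

```python
import math
import random
from collections import Counter

def assign_level(m, rng):
    """Draw a node's top layer: floor(-ln(u) * mL) with mL = 1 / ln(m)."""
    m_l = 1.0 / math.log(m)
    u = 1.0 - rng.random()  # in (0, 1], avoids log(0)
    return int(-math.log(u) * m_l)

rng = random.Random(42)
top_levels = Counter(assign_level(m=16, rng=rng) for _ in range(100_000))

# A node whose top level is L is present in every layer from 0 up to L.
max_level = max(top_levels)
nodes_per_layer = [
    sum(count for level, count in top_levels.items() if level >= layer)
    for layer in range(max_level + 1)
]
for layer, count in enumerate(nodes_per_layer):
    print(f"layer {layer}: {count} nodes")
```

Layer 0 holds every node, and each layer above it holds roughly 1/16 of the one below, which is what lets a search descend quickly from a sparse top layer to the dense bottom one.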

By 2026, DiskANN has become the go-to for trillion-scale datasets. It stores the bulk of the index on SSDs instead of expensive RAM, making scaling vector databases for trillions of embeddings financially viable.

Think of Product Quantization (PQ) as “vector compression.” It shrinks each vector so you can fit more data into memory, trading a slight accuracy dip for major cost savings.
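A simplified sketch of the idea: split each vector into sub-vectors, then store only the index of the nearest centroid for each one. To stay short, this version samples centroids straight from the data, whereas production PQ typically trains them with k-means. All sizes here (8 dimensions, 4 sub-vectors, 16 centroids) are arbitrary.

```python
import random

def sq_dist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def train_codebooks(vectors, num_subvectors, num_centroids, rng):
    """Simplified training: sample centroids from the data for each subspace.
    Production PQ typically runs k-means here instead."""
    sub_len = len(vectors[0]) // num_subvectors
    codebooks = []
    for s in range(num_subvectors):
        subs = [v[s * sub_len:(s + 1) * sub_len] for v in vectors]
        codebooks.append(rng.sample(subs, num_centroids))
    return codebooks

def encode(vector, codebooks):
    """Replace each sub-vector with the index of its nearest centroid."""
    sub_len = len(vector) // len(codebooks)
    codes = []
    for s, book in enumerate(codebooks):
        sub = vector[s * sub_len:(s + 1) * sub_len]
        codes.append(min(range(len(book)), key=lambda c: sq_dist(sub, book[c])))
    return codes

def decode(codes, codebooks):
    """Approximate reconstruction: concatenate the chosen centroids."""
    out = []
    for code, book in zip(codes, codebooks):
        out.extend(book[code])
    return out

rng = random.Random(0)
data = [[rng.random() for _ in range(8)] for _ in range(200)]
codebooks = train_codebooks(data, num_subvectors=4, num_centroids=16, rng=rng)

codes = encode(data[0], codebooks)
approx = decode(codes, codebooks)
print("codes:", codes)
print("reconstruction error:", round(sq_dist(data[0], approx), 4))
# Assuming one byte per code and ignoring the shared codebooks:
print("bytes: raw float32 =", 8 * 4, "| PQ codes =", len(codes))
```

The reconstruction is lossy, which is the accuracy dip mentioned above, but each vector now costs 4 bytes instead of 32 in this toy setup.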

Bottom line on HNSW vs DiskANN: Use HNSW for low-latency, high-recall workloads under ~10M vectors. Use DiskANN when scaling beyond that or when RAM budget is a constraint.

The most common use case for vector databases in 2026 is Retrieval-Augmented Generation (RAG). LLMs have knowledge cutoffs and limited context windows. A vector database acts as AI long-term memory for the model: an external knowledge store that keeps responses accurate and current.

How RAG architecture works:

Example: An AI support bot for a hardware company doesn’t know about yesterday’s manual update. With RAG, the bot queries the vector database for the latest manual sections and feeds that context to the LLM — no retraining required.
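The support-bot flow can be sketched as retrieve, then augment the prompt, then generate. In the sketch below the retriever is a placeholder that ranks by shared words (a real system would embed the question and run a vector search), the manual sections are invented, and the LLM call itself is left out.

```python
def words(text):
    """Lowercase word set with simple punctuation stripped."""
    return {w.strip(".,:?!") for w in text.lower().split()}

def retrieve(query, store, top_k=2):
    """Placeholder retriever: ranks passages by number of shared words.
    A real system would embed the query and run a vector search instead."""
    q_words = words(query)
    ranked = sorted(store, key=lambda p: len(q_words & words(p)), reverse=True)
    return ranked[:top_k]

def build_prompt(question, passages):
    """Augment the user's question with retrieved context before calling an LLM."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {question}\n"
    )

# Hypothetical manual sections, including one that was updated yesterday.
manual_sections = [
    "Firmware 2.1 update: hold the reset button for 5 seconds to reboot.",
    "The device ships with a 1.5 m USB-C cable.",
    "Clean the casing with a dry cloth only.",
]

question = "How do I reboot after the firmware update?"
prompt = build_prompt(question, retrieve(question, manual_sections, top_k=1))
print(prompt)  # this string would be sent to the LLM of your choice
```

Because the new manual section reaches the model through the prompt, updating the bot's knowledge means updating the store, not the model.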

Pro tip: For production RAG, always implement metadata filtering (e.g., filter by date, document type, or tenant ID) alongside vector similarity to improve precision.
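A minimal sketch of metadata filtering combined with similarity ranking is shown below. It uses a pre-filter strategy (filter first, then rank), which is one of several possible approaches. The record layout and field names such as `tenant_id` and `doc_type` are assumptions for illustration, not a specific database's schema.

```python
import math

def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def filtered_search(query_vec, records, filters, top_k=3):
    """Pre-filter: keep records whose metadata matches every filter, then rank by similarity."""
    candidates = [
        r for r in records
        if all(r["metadata"].get(key) == value for key, value in filters.items())
    ]
    candidates.sort(key=lambda r: cosine(query_vec, r["vector"]), reverse=True)
    return [r["id"] for r in candidates[:top_k]]

records = [
    {"id": "a", "vector": [1.0, 0.0], "metadata": {"tenant_id": "acme", "doc_type": "manual"}},
    {"id": "b", "vector": [0.9, 0.1], "metadata": {"tenant_id": "globex", "doc_type": "manual"}},
    {"id": "c", "vector": [0.0, 1.0], "metadata": {"tenant_id": "acme", "doc_type": "manual"}},
    {"id": "d", "vector": [0.7, 0.7], "metadata": {"tenant_id": "acme", "doc_type": "faq"}},
]

filters = {"tenant_id": "acme", "doc_type": "manual"}
print(filtered_search([1.0, 0.1], records, filters))  # ['a', 'c']
```

Record "b" is a close match by similarity alone, but the tenant filter excludes it, which is exactly the behavior a multi-tenant RAG system needs.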

Vector databases are powerful, but watch out for these production gotchas:

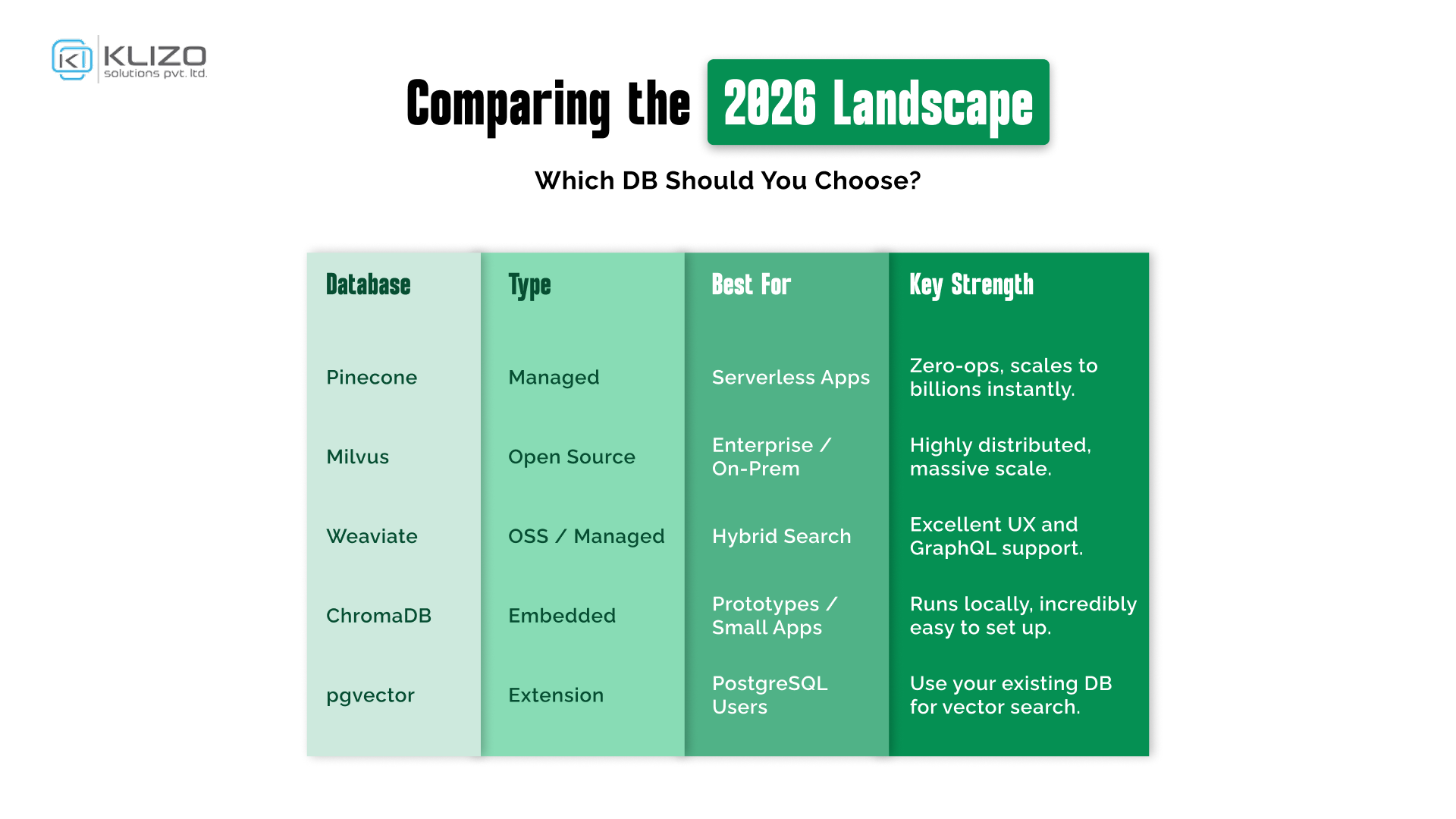

How do I choose a vector database for RAG?

Start by evaluating your scale (thousands vs billions of vectors), infrastructure (cloud vs on-prem), and whether you need hybrid search. For most RAG applications, Pinecone or Weaviate offer the fastest path to production. If you’re already on PostgreSQL, pgvector is a strong starting point.

What’s the difference between vector search and keyword search?

Keyword search matches exact or fuzzy strings. Vector search matches semantic meaning — so “crimson footwear” finds “red shoes.” Most production systems in 2026 use hybrid search combining both.

Is pgvector good enough for production?

Yes — pgvector performance has improved significantly with HNSW index support. For datasets under ~5M vectors with moderate query load, it’s a solid choice that avoids adding new infrastructure.

How do you scale vector databases for trillions of embeddings?

Use DiskANN-based indexing (supported by Milvus and Microsoft’s proprietary solutions) to store indexes on SSD rather than RAM. Combine with sharding and tiered storage strategies.

What is AI long-term memory?

It refers to using a vector database as an external knowledge store for LLMs — enabling them to “remember” information beyond their training data and context window, typically via RAG architecture.

We are moving beyond text. The next frontier for developers is Multi-modal Vector Databases, where a single search can span images, audio, and code. As an engineer, mastering the vector stack isn’t just a resume-builder; it’s the price of entry for the AI era.

Ready to start? If you’re looking for deep-dive tutorials on AI infrastructure, check out our Klizos Tech Blog for more insights. For official documentation on the latest indexing benchmarks, visit the ANN-Benchmarks repository.

Joey Ricard

Klizo Solutions was founded by Joseph Ricard, a serial entrepreneur from America who has spent over ten years working in India, developing innovative tech solutions, building good teams, and admirable processes. And today, he has a team of over 50 super-talented people with him and various high-level technologies developed in multiple frameworks to his credit.
